Generate

Tests from repos, docs, specs, and live apps — in minutes, not sprints.

Claude Code, Cursor, and Copilot write code faster than any team can verify it. QualityMax is the Generate → Gate → Heal layer that catches what breaks and what leaks — before it ships.

From repo import to live CI gate — under 90 minutes.

Functional, security, and quality checks before every merge. Bad code doesn't ship.

When the UI changes, tests repair themselves. No maintenance debt, no flaky suite.

Attack your own chatbots for prompt injection, jailbreaks, and PII leakage before users do.

BUILT ON QA EXPERTISE FROM

Deutsche Bank

Cisco

CipherHealth

Watch how AI generates, executes, and self-heals your tests — end to end.

The Questions Every Buyer Asks

The questions that stop deals — answered directly, no spin.

Self-healing rewrites broken selectors automatically. RAG-grounded generation means selectors come from what your app actually renders — not guesses. When the UI drifts, AI fixes the test without waking you up.
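As a rough illustration of the idea, here is a minimal Python sketch of selector healing. It is not QualityMax's implementation: `heal_selector`, the candidate list, and the `rendered` dictionary standing in for a live page are all hypothetical. The sketch assumes a replacement is only safe when it matches exactly one rendered element.

```python
from typing import Callable, Optional, Sequence


def heal_selector(
    broken: str,
    candidates: Sequence[str],
    count_matches: Callable[[str], int],
) -> Optional[str]:
    """Return a selector to use in place of `broken`, or None if none is safe."""
    if count_matches(broken) == 1:
        return broken  # still valid, nothing to heal
    for candidate in candidates:
        # Accept only unambiguous matches: 0 or 2+ hits would make the test flaky.
        if count_matches(candidate) == 1:
            return candidate
    return None


# Hypothetical stand-in for a rendered page: selector -> number of matching elements.
rendered = {
    "#submit-btn": 0,                      # the old id was removed in a UI change
    "button.primary": 3,                   # ambiguous, so it is skipped
    "[data-testid='checkout-submit']": 1,  # unique, so it is chosen
}

healed = heal_selector(
    "#submit-btn",
    ["button.primary", "[data-testid='checkout-submit']"],
    lambda selector: rendered.get(selector, 0),
)
print(healed)  # [data-testid='checkout-submit']
```

With Playwright's Python API, the `count_matches` callback could plausibly be `lambda s: page.locator(s).count()`, so candidates are checked against what the app actually renders.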

One repo import → 30+ structured test cases → CI gate. Steps, tags, priorities, and runnable Playwright — generated in minutes. Typical time from zero to CI-gated coverage: under 2 hours.

We route across Claude, GPT, and Gemini in parallel — not as a fallback. On April 15, 2026, Anthropic had a partial outage. Our customers kept shipping because two other models picked up the work.

QualityMax was built for dev-only teams. AI generates, runs, and heals tests. You review cases, not write selectors. Starter plan includes 1:1 concierge setup to get your first CI gate live.

Yes. The qmax local agent runs on your machine and reaches apps behind VPNs, firewalls, or on localhost. AI decisions happen server-side; the browser stays inside your network.

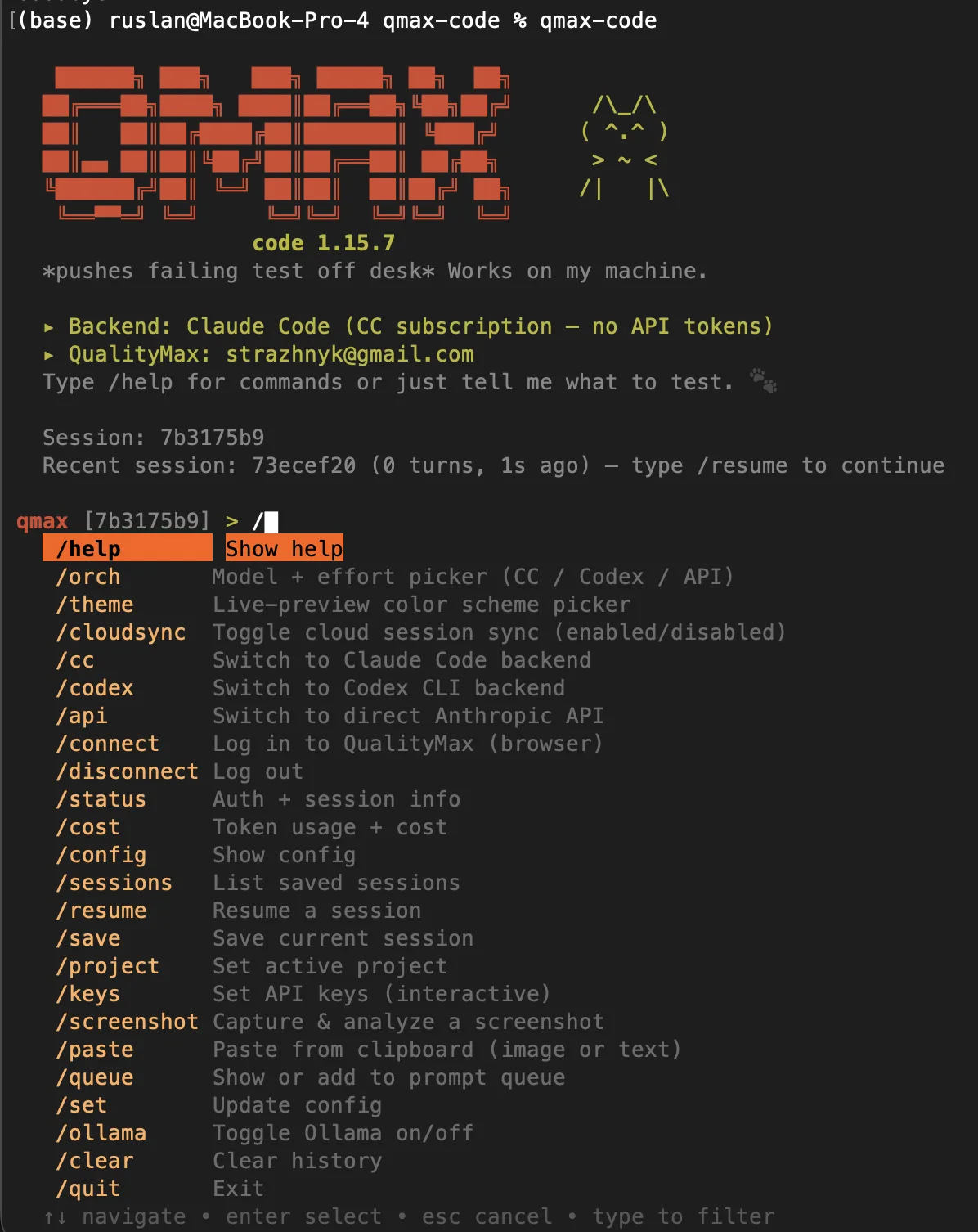

Never. Bring your own OpenAI, Anthropic, or Gemini keys on the Free plan. Or let us handle routing on Starter. We're the only test platform with no lock-in at the LLM layer.

We run 7,000+ tests on every commit to our own repo — through QualityMax. GitHub OAuth grants read-only access; we never persist your source code. Dedicated security workflows (SAST, dependency scanning, secret detection) run on every push. We dogfood our own security stack because we have to. Enterprise adds private deployment, SOC 2 audit trail, and SSO.

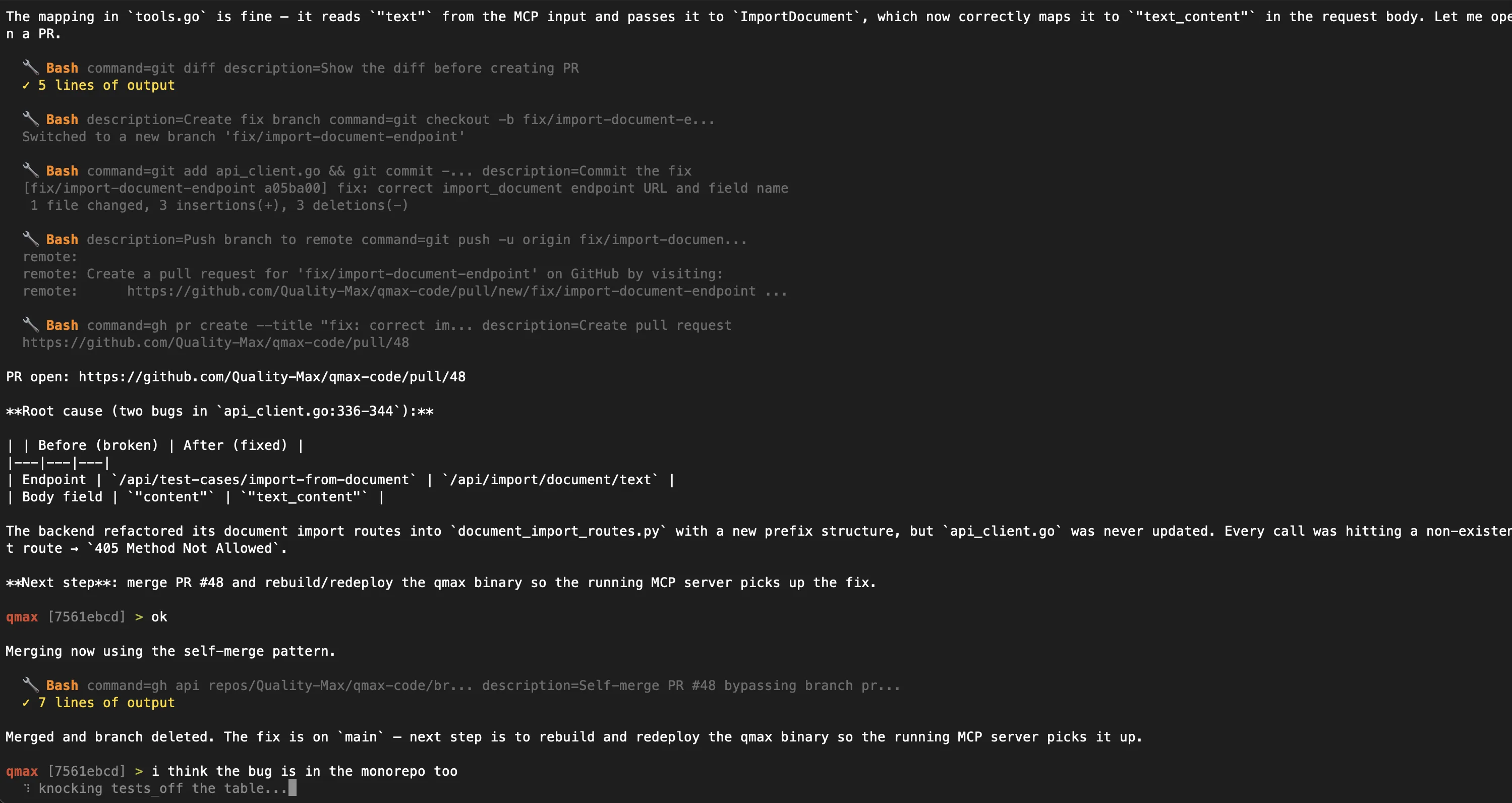

We do not replace Claude Code. We complement it, especially through qmax-code. Skill files write one test. QualityMax runs the loop. Claude Code has no persistent memory of your app's DOM, no CI trigger, no self-healing when a selector breaks at 3am, and no token optimization. Our RAG pipeline sends a focused 800-token selector context instead of the full codebase. A test generation that costs ~$0.04 as a direct prompt costs under $0.004 through our routing (figures are for functional tests from imported code; AI-crawl flows vary). The orchestration is the product.

You're already paying ~€1,100/month for tools that don't talk to each other. Mabl covers E2E but not security. SonarQube covers code quality but not browser tests. BrowserStack gives you devices but not AI generation. CodeRabbit reviews PRs but can't run a single test. QualityMax does all of it — E2E, API, security, performance, AI code review — from one import, one CI gate, one invoice at €79/month.

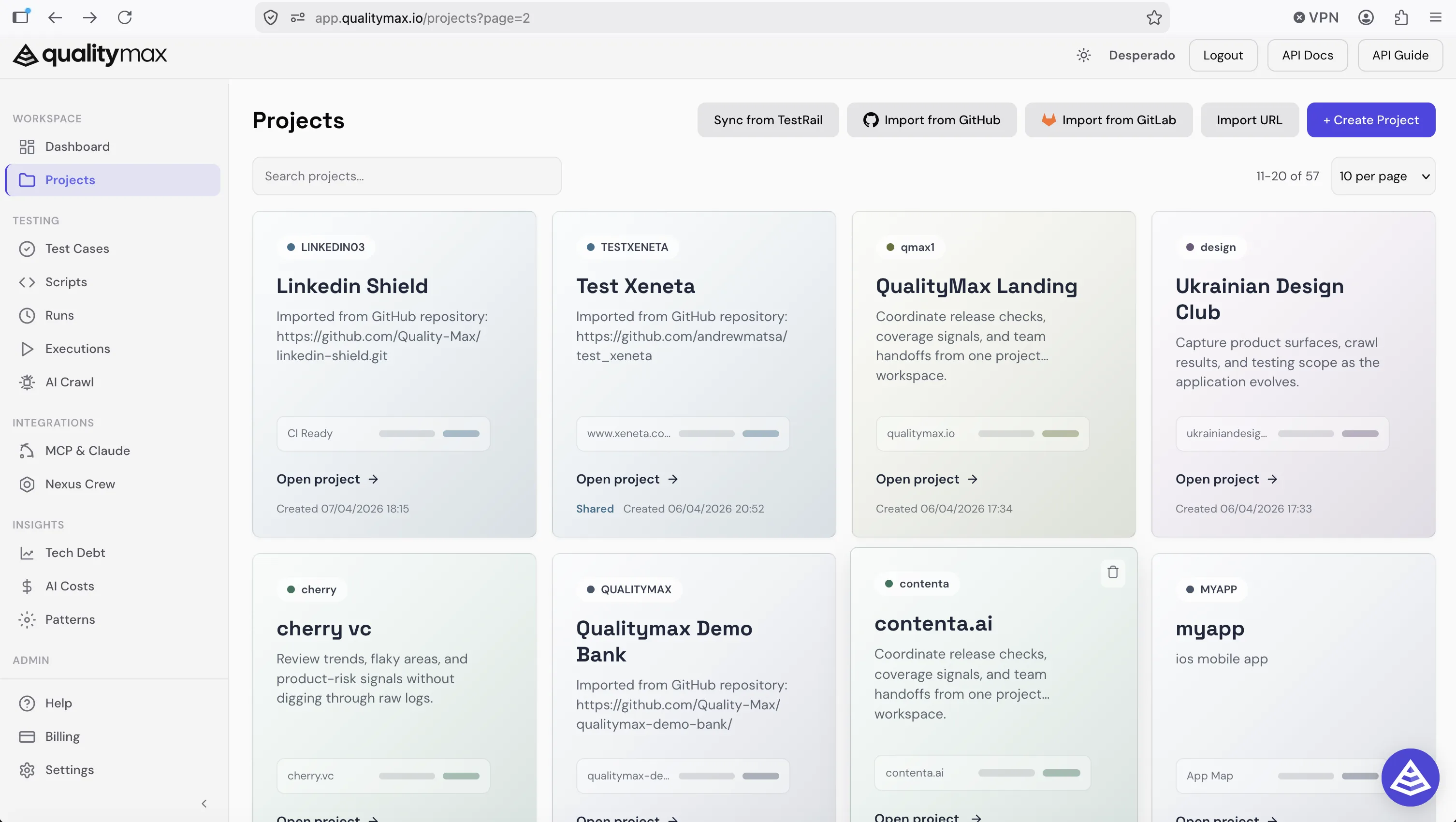

From repository import to production-ready test suites — everything your team needs in one place.

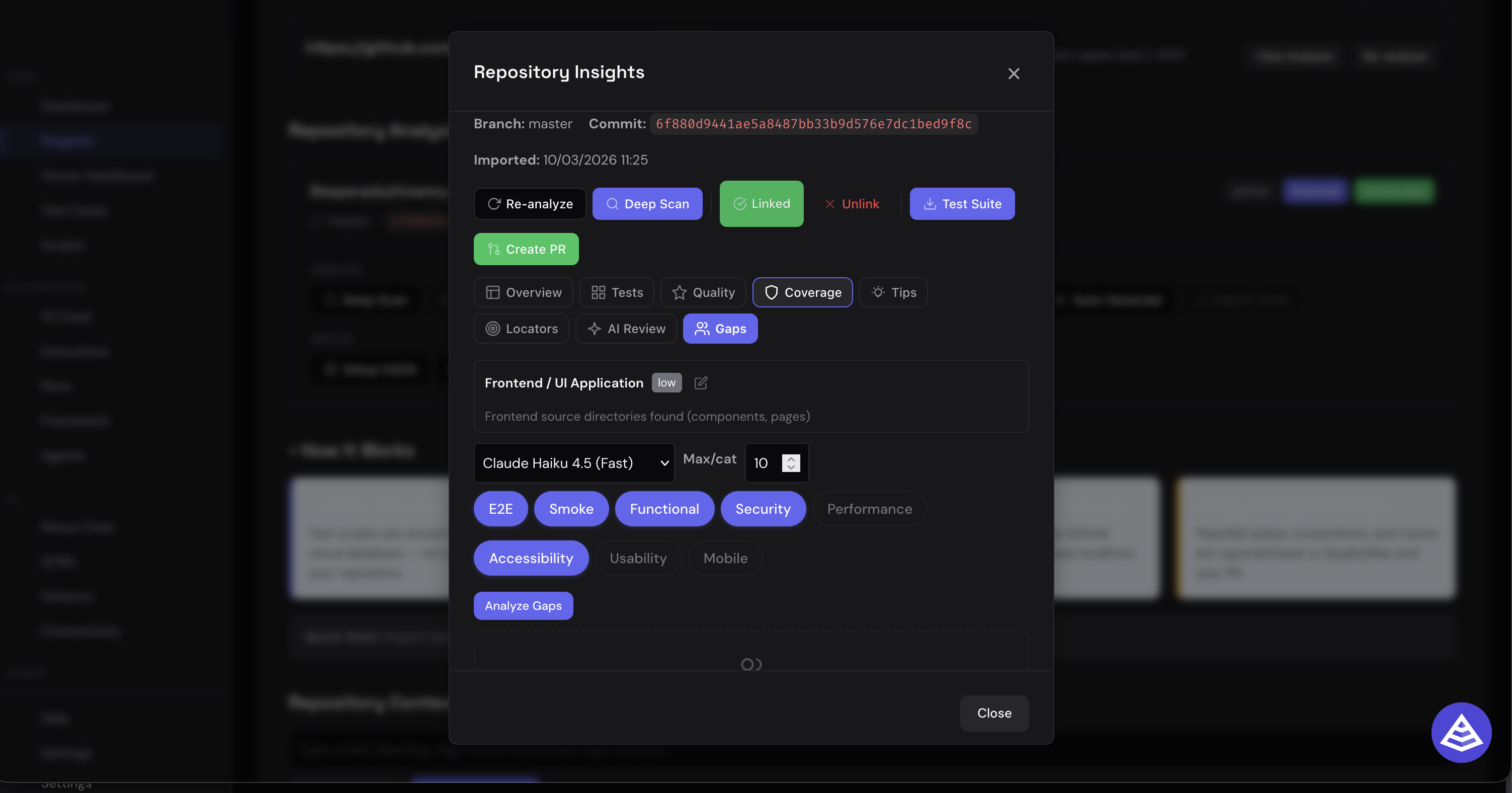

Import any GitHub repository. QualityMax analyzes your source code, discovers API endpoints, maps frontend components, and identifies coverage gaps — automatically.

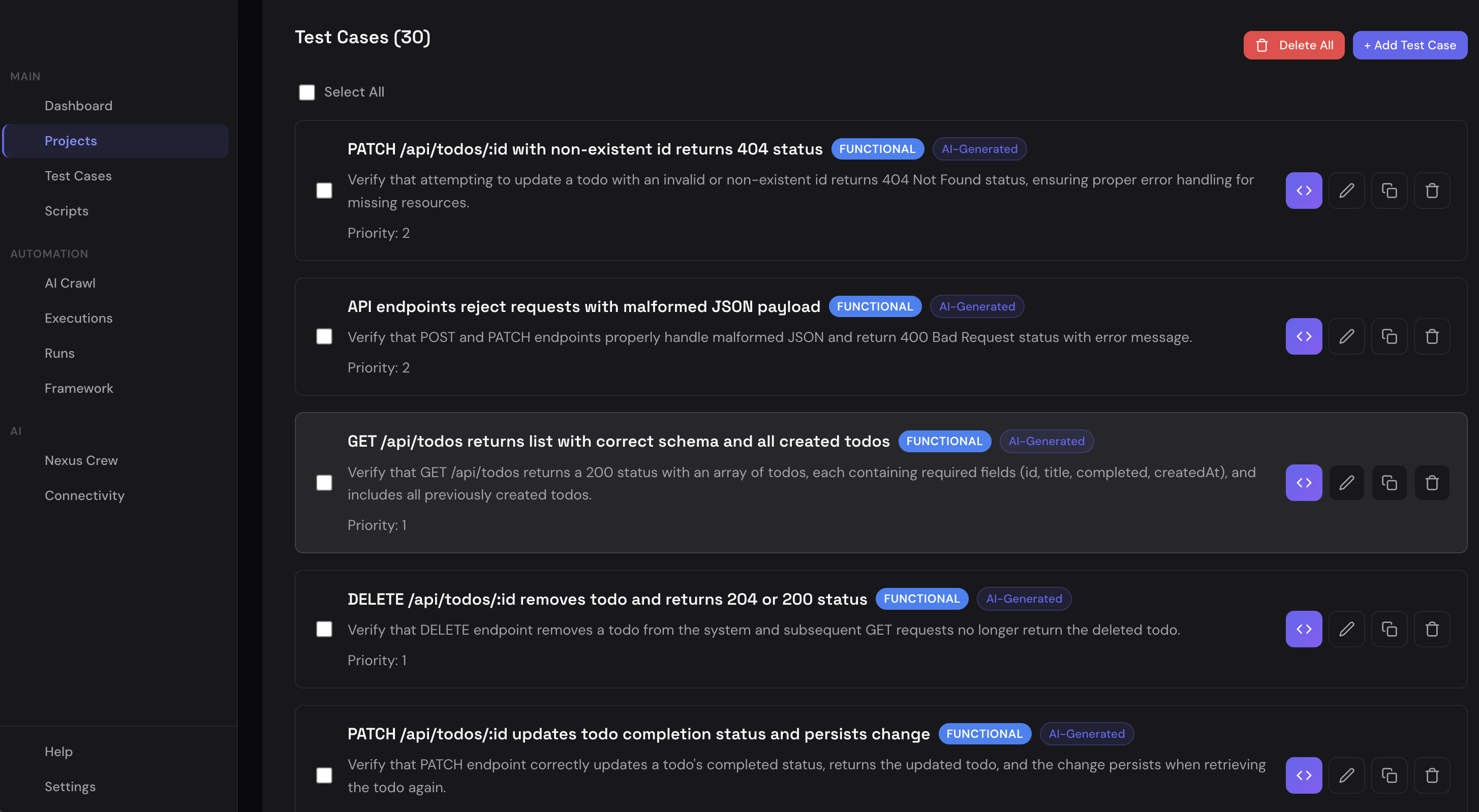

Generate 30+ test cases from a single repo import. Each test case includes structured steps, expected results, tags, and priority — ready for automation.

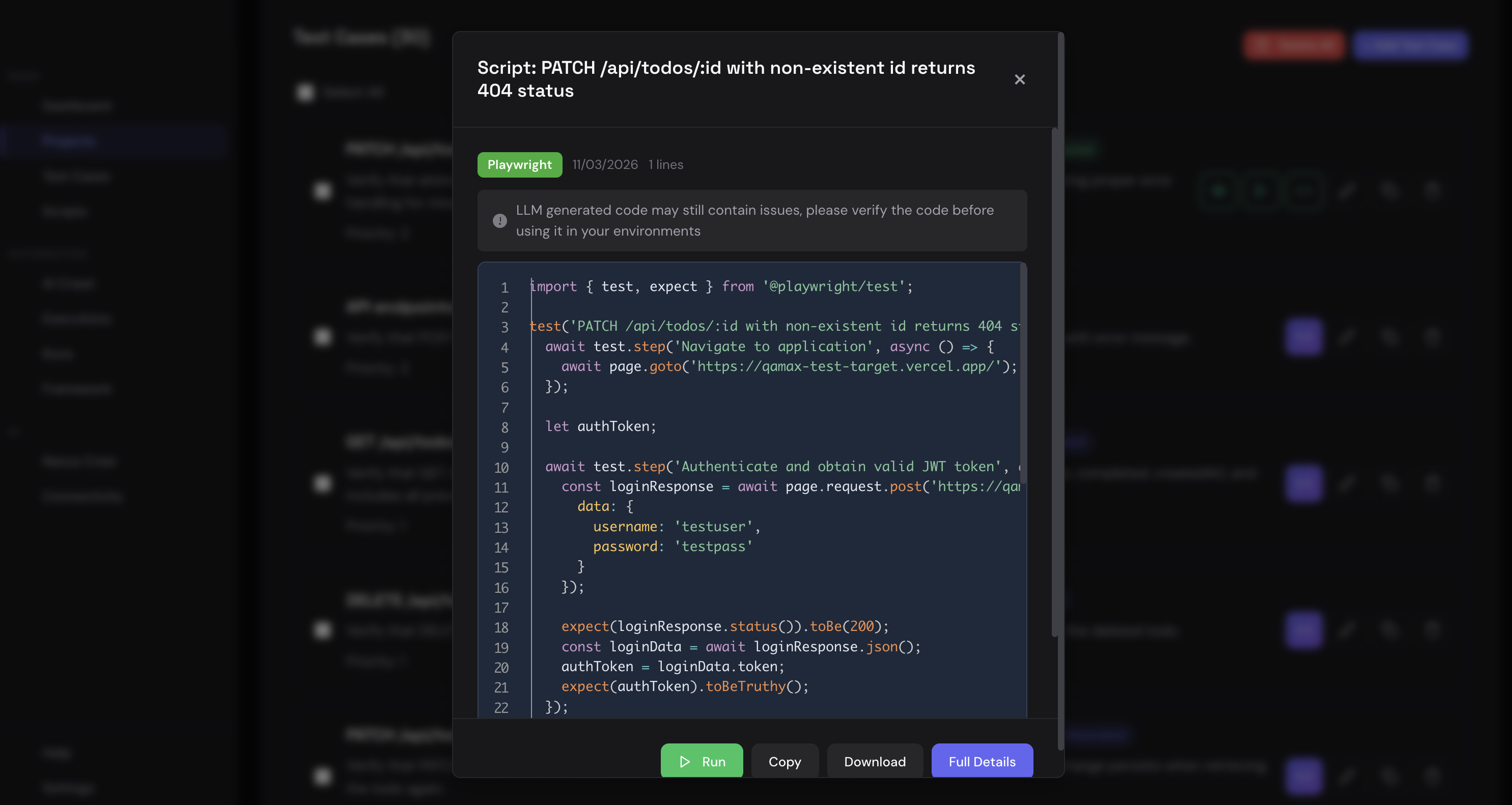

Generated Playwright and pytest scripts with real assertions, proper authentication flows, and API endpoint validation. Run, copy, or export instantly.

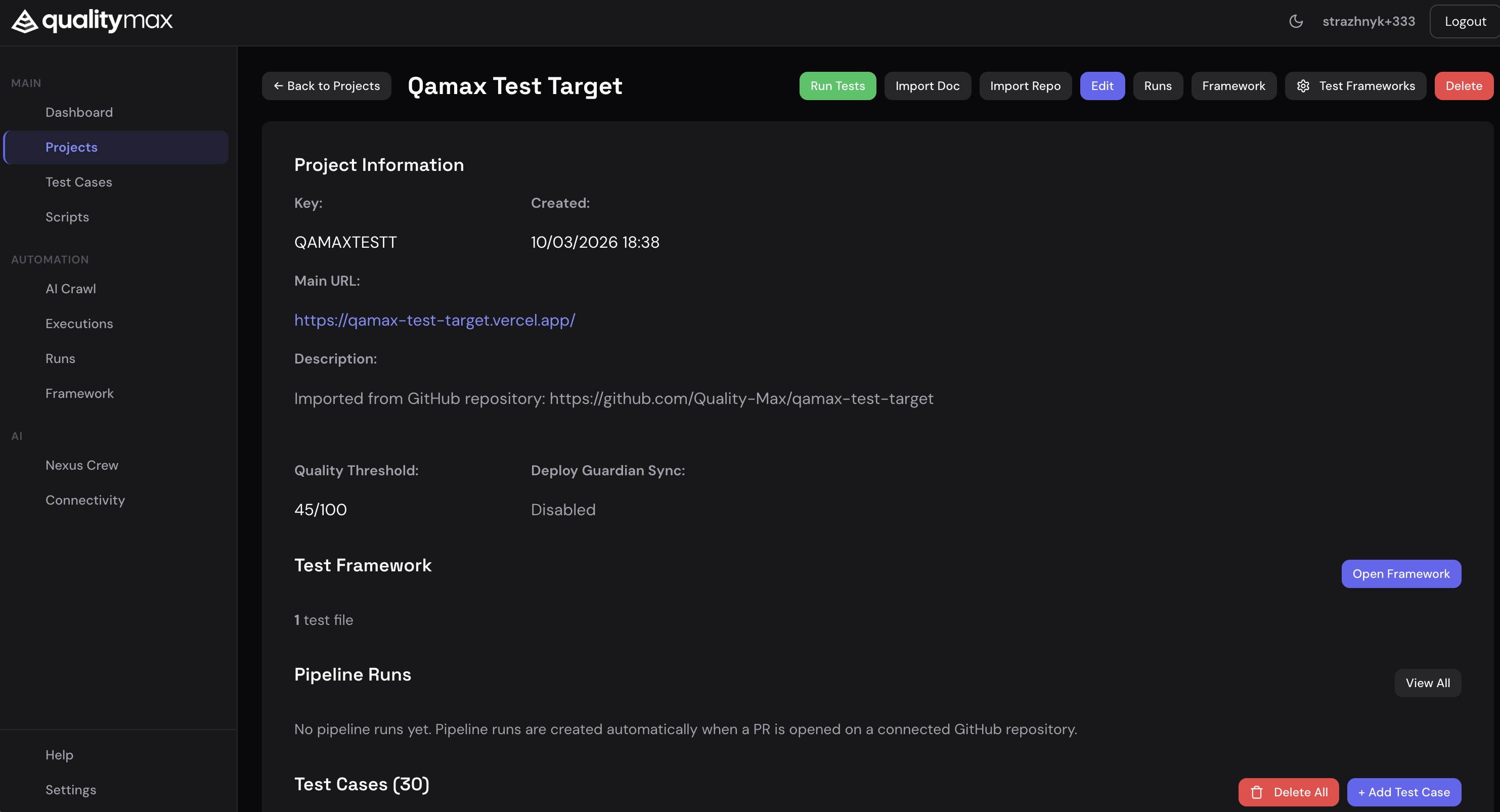

Each project brings together repository data, test frameworks, pipeline runs, quality thresholds, and deployment hooks — one unified view for your entire testing lifecycle.

The operational QA layer around AI-coded software: app maps, repo imports, terminal agents, PR review, security, performance, and CI gates in one system.

QualityMax now covers the surfaces teams actually use when shipping with Codex, Claude Code, and repository agents: project workspaces, qmax-code terminal workflows, pull request findings, and trend dashboards.

Import from GitHub, GitLab, TestRail, URL crawls, or docs. Each workspace keeps test cases, scripts, runs, site audits, performance, security, tech debt, costs, and patterns tied to the project that owns the release.

Run QualityMax through Codex, Claude Code, or direct API backends. Use commands for orchestration, project context, screenshots, queues, saved sessions, cloud sync, cost checks, and model switching without leaving the terminal.

QualityMax posts file, line, severity, why it matters, and a ready-to-use fix prompt for LLM agents. Security, logic, config, and persistence issues move from review noise to actionable repair work.
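To make the shape of such a finding concrete, here is a hedged Python sketch. The five fields mirror the items listed above, but the class name `Finding`, the field names, the comment layout, and the example values are assumptions for illustration, not QualityMax's actual schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    """One pull request finding; field names are illustrative assumptions."""

    file: str
    line: int
    severity: str
    why_it_matters: str
    fix_prompt: str

    def to_comment(self) -> str:
        # Render the finding as plain text suitable for a PR comment.
        return (
            f"[{self.severity.upper()}] {self.file}:{self.line}\n"
            f"Why it matters: {self.why_it_matters}\n"
            f"Fix prompt: {self.fix_prompt}"
        )


# Hypothetical example values.
finding = Finding(
    file="api/session.py",
    line=42,
    severity="high",
    why_it_matters="Session tokens are written to the log in clear text.",
    fix_prompt="Redact the token before logging in api/session.py line 42.",
)
print(finding.to_comment())
```

Keeping the fix prompt as its own field means a coding agent can consume it directly, without parsing the human-readable comment.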

The agent can inspect root cause, show the exact diff, create a branch, commit the fix, push it, open the pull request, and hand off merge or redeploy steps. QA becomes an executable workflow inside the coding agent.

Run automatic scans on every pull request, track pass/warn/fail verdicts, inspect severity buckets, and keep recent scan history visible next to executions, security, patterns, and AI cost telemetry.
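One way severity buckets could map to a pass/warn/fail verdict is sketched below in Python. The thresholds are assumptions chosen for illustration (any critical or high finding fails, any medium finding warns); the page does not state the real rules, and `verdict` is a hypothetical name.

```python
from collections import Counter
from typing import Iterable


def verdict(severities: Iterable[str]) -> str:
    """Collapse a scan's finding severities into 'pass', 'warn', or 'fail'."""
    buckets = Counter(s.lower() for s in severities)
    # Assumed policy: critical/high block the merge, medium only warns.
    if buckets["critical"] or buckets["high"]:
        return "fail"
    if buckets["medium"]:
        return "warn"
    return "pass"


print(verdict(["low", "medium"]))         # warn
print(verdict(["low", "High", "medium"])) # fail
print(verdict([]))                        # pass
```

A CI job could turn a "fail" verdict into a non-zero exit code, which is what keeps a merge blocked until the findings are resolved.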

Describe correct behavior in plain language. QualityMax generates comprehensive test suites automatically.

Upload PDFs, Word docs, or spreadsheets. AI extracts test cases from specs in seconds.

Intelligent crawling maps your app and generates test scenarios, including edge cases humans miss.

Tests accumulate into a persistent Playwright framework. Helpers and page objects are shared across your suite.

Push to GitHub with auto-generated workflows. Tests run on every PR with Check Runs and comments.

Connect Vercel, Netlify, or any platform. Every preview deploy triggers automatic smoke tests.

Merge blocked until tests pass. Automated quality gates protect your main branch.

Intelligent adaptation keeps your quality gates working as your product evolves.

We don't bet your QA on one model's uptime. Claude, GPT, and Gemini routed per task — when one provider degrades, your tests keep running.

Full browser automation with screenshots, video, and real-time visualization of test runs.

Automated quality scoring across functional correctness, security, usability, and accessibility.

Test your AI chatbot for prompt injection, jailbreaks, PII leakage, and bias. 39 adversarial attacks with LLM-as-judge scoring.

Every AI test platform ships on top of an LLM provider. When that provider degrades — and they do, often — the platform goes with it. QualityMax routes each task (crawl decisions, test generation, healing, review) across Claude, GPT, and Gemini. When one falls over, your tests keep running on the others. No vendor lock-in, no single point of failure.
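The routing idea can be sketched in a few lines of Python. This is a simplified, sequential illustration under stated assumptions, not QualityMax's router, and it does not reproduce parallel dispatch. `route`, `ProviderDown`, the preference table, and the stub provider functions are all hypothetical, and no real LLM API is called.

```python
from typing import Callable, Dict, List, Sequence, Tuple


class ProviderDown(Exception):
    """Raised by a provider client when the upstream model is unavailable."""


def route(
    task: str,
    prompt: str,
    preferences: Dict[str, Sequence[str]],
    providers: Dict[str, Callable[[str], str]],
) -> Tuple[str, str]:
    """Try each provider preferred for `task`; return (provider_name, reply)."""
    errors: List[str] = []
    for name in preferences[task]:
        try:
            return name, providers[name](prompt)
        except ProviderDown as exc:
            errors.append(f"{name}: {exc}")  # remember why it was skipped
    raise RuntimeError("all providers failed: " + "; ".join(errors))


def degraded(prompt: str) -> str:
    # Stub simulating a provider in partial outage.
    raise ProviderDown("partial outage")


providers = {
    "claude": degraded,
    "gpt": lambda prompt: f"gpt handled: {prompt}",
    "gemini": lambda prompt: f"gemini handled: {prompt}",
}
# Assumed per-task preference order.
preferences = {"healing": ["claude", "gpt", "gemini"]}

used, reply = route("healing", "repair selector #submit-btn", preferences, providers)
print(used, "->", reply)  # gpt -> gpt handled: repair selector #submit-btn
```

Because the preference order is keyed by task, crawl decisions, generation, healing, and review can each prefer a different model while sharing the same failover path.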

Pictured: Anthropic status on 2026-04-15. Two services in partial outage, Claude Code degraded. Customers building on a single model felt the outage; ours kept shipping.

Founder Ruslan Strazhnyk on what 20 years in QA taught him about where automation breaks — and why zero-config testing on every PR changes the game for Go, Rust, Python, and Playwright teams.

Choose the plan that fits your team. Start free, scale as you grow.

For solo devs trying QualityMax. Bring your own LLM keys.

For teams shipping to prod every week. AI included, set up live in 48 hours.

For regulated orgs that need a dedicated team, compliance, and contracts.

Paste your chatbot URL. We'll attack it 39 ways and tell you what's broken. Free, 60 seconds, no signup required.

Scan Your Agent →